I’m shutting down Aux and Poison Pill. Two products, two very different ideas, but ultimately the same wall.

Aux was an AI-powered sample generation library. I built and trained a custom model as part of my Computer Science degree at Goldsmiths, University of London, and the vision was straightforward: give producers and musicians access to high-quality, original samples generated on demand. No digging through sample packs, no licensing headaches. Just describe what you want and get something usable.

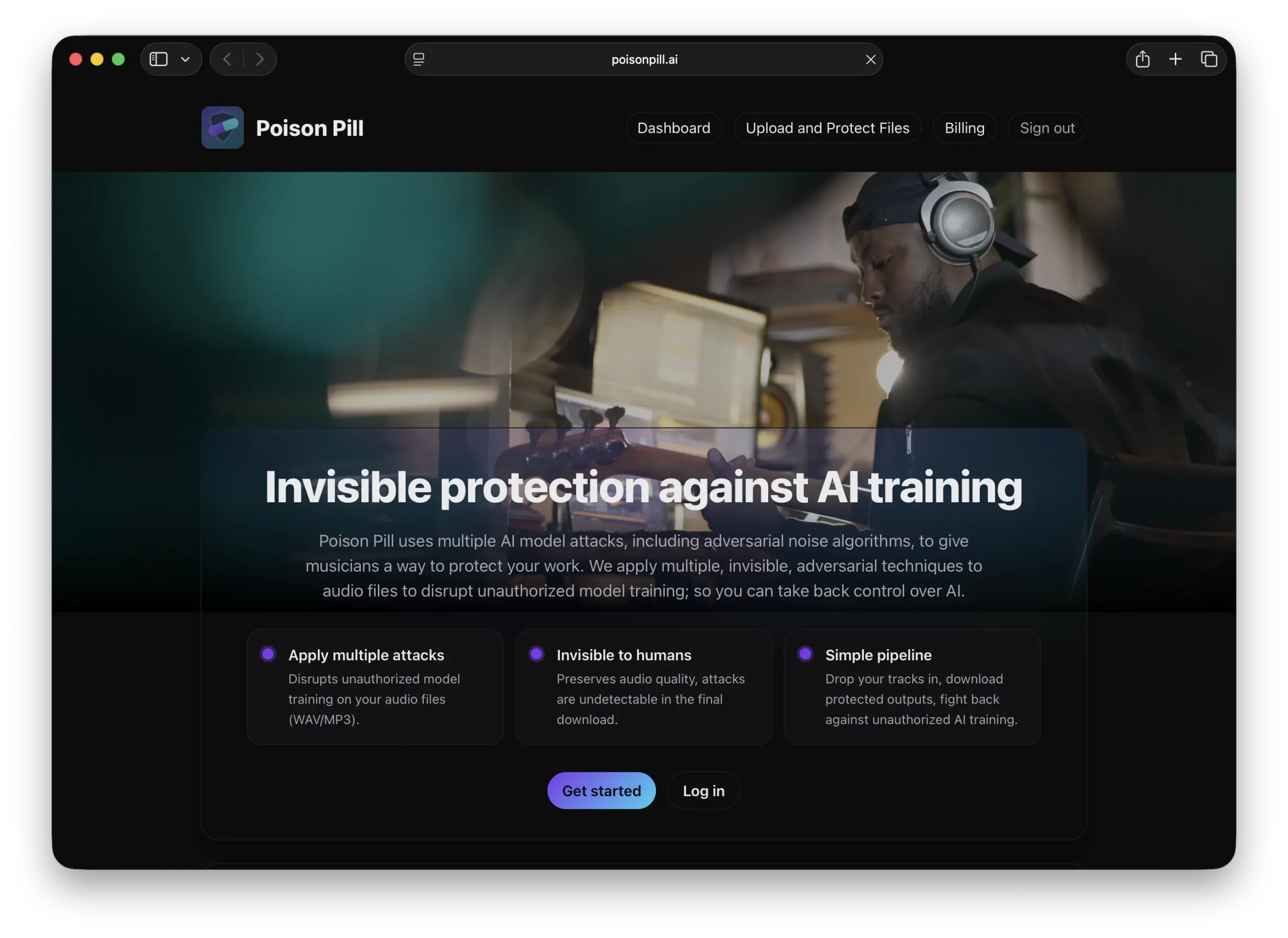

Poison Pill was the opposite side of the same coin: an anti-AI tool designed to protect musicians’ work from being scraped and used to train generative models without their consent. The idea was to apply adversarial perturbations to audio: subtle, inaudible changes that would disrupt an AI model’s ability to learn from the track.

One product creates with AI. The other defends against it. Both felt important. And both ran into the exact same problem.

The Licensing Problem

For Aux to work properly, to generate samples that were genuinely useful across genres and styles, the model needed to be trained on a large, diverse dataset of music. Not just any music, but properly licensed music. That means licensing deals. Potentially with major labels. The kind of multi-year, resource-intensive negotiation process that’s a full-time job in itself.

I knew this going in, but I thought I could start small and build up. The reality is that “start small” in music licensing still means lawyers, contracts, and conversations that move at a pace measured in quarters, not weeks.

With Poison Pill, I initially found an approach that didn’t require training on existing music at all: an adversarial attack that could be applied without a reference dataset. The problem was that it wasn’t generalisable. It worked against specific models but fell apart when tested against a broader range of AI systems. A defence that only works against one attacker isn’t much of a defence.

The technique that did generalise, universal adversarial perturbations, required exactly what I was trying to avoid: training on a large dataset of music. Which brought me right back to the same licensing conversation I was already struggling with on the Aux side.
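For readers who haven't met the term, here is a minimal sketch of why a perturbation tuned to one model can fail against another. It is a toy illustration, not how Poison Pill worked: the two "models" are hypothetical linear scorers over four made-up audio samples, and every name and number below is an assumption chosen for the example.

```python
# Toy illustration only: a "model" here is a weight vector and its score is a
# dot product with the audio samples. Real audio models are far more complex.

def sign(value):
    """Return -1, 0 or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)

def score(weights, samples):
    """How strongly a (hypothetical) linear model responds to the audio."""
    return sum(w * x for w, x in zip(weights, samples))

def craft_perturbation(weights, epsilon):
    """For a linear model the gradient of the score with respect to each
    sample is its weight, so stepping against the sign of the weight lowers
    the score while changing every sample by at most epsilon."""
    return [-epsilon * sign(w) for w in weights]

def apply_perturbation(samples, delta):
    """Add the perturbation and keep samples in the usual [-1, 1] range."""
    return [max(-1.0, min(1.0, x + d)) for x, d in zip(samples, delta)]

audio = [0.2, -0.1, 0.4, 0.3]          # made-up audio samples
model_a = [0.5, -0.25, 1.0, 0.75]      # the model the perturbation targets
model_b = [-0.5, 0.25, 1.0, -0.75]     # a different model, never seen
epsilon = 0.01                         # maximum change per sample

delta = craft_perturbation(model_a, epsilon)
protected = apply_perturbation(audio, delta)

drop_a = score(model_a, audio) - score(model_a, protected)
drop_b = score(model_b, audio) - score(model_b, protected)

print("score drop on targeted model:", round(drop_a, 4))
print("score drop on unseen model:  ", round(drop_b, 4))
```

In this toy setup the perturbation lowers the targeted model's score but does not lower the unseen model's score at all. Finding a single perturbation that works across many models and many tracks is what requires optimising over a large dataset.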

The Irony That Killed It

Here’s the part that really got to me.

Musicians, understandably, have a deeply negative opinion of AI technology right now. They’ve watched generative models get trained on their work without permission or compensation. They’ve seen AI-generated tracks flood streaming platforms.

So when I approached musicians about licensing their music for Aux (an AI tool, however useful), the answer was almost always no. I get it. Why would you hand over your work to train yet another AI system, even one that’s trying to help you?

But the real kicker was Poison Pill. Here’s a tool explicitly designed to protect musicians from AI. It’s on their side. And yet the conversation still went: “To build this defence system, I need to license your music to train it.” The look on people’s faces when I explained that was enough. You’re asking musicians who are angry about AI training on their work to… let you train on their work. The fact that the end goal is protective doesn’t change the visceral reaction to that ask.

I got tired of that conversation very quickly. Not because the musicians were wrong (they weren’t), but because I realised I was trying to solve a problem where the solution required the very thing the people I was trying to help were most opposed to.

Knowing When to Walk Away

I could have pushed through. I could have spent the next two years building licensing relationships, negotiating deals, and slowly assembling the datasets both products needed. But I’ve been through that process before with previous companies, and I know what it costs: not just in money, but in time, focus, and energy. I wasn’t willing to go through it again. Not for these products, not at this stage.

That might sound like giving up. But I think there’s a difference between giving up and choosing not to re-enter a game you’ve already played. I know what a multi-year licensing grind looks like. I know how it eats into everything else. And I have other products, Direct and Podtastic, that don’t have this particular bottleneck and are closer to where I want to be.

The music industry’s relationship with AI is going to evolve. Licensing frameworks will mature. Someone will figure out the right model for training AI systems on music in a way that’s fair and transparent. But that someone doesn’t have to be me, and it doesn’t have to be now.

Aux and Poison Pill taught me something I probably should have seen earlier: sometimes the biggest obstacle isn’t technical. It’s not even commercial. It’s structural: the thing you need to build the product is the thing your users are least willing to give you.

I’ll have more to share about what I’m focusing on next. Stay tuned for next week’s post.